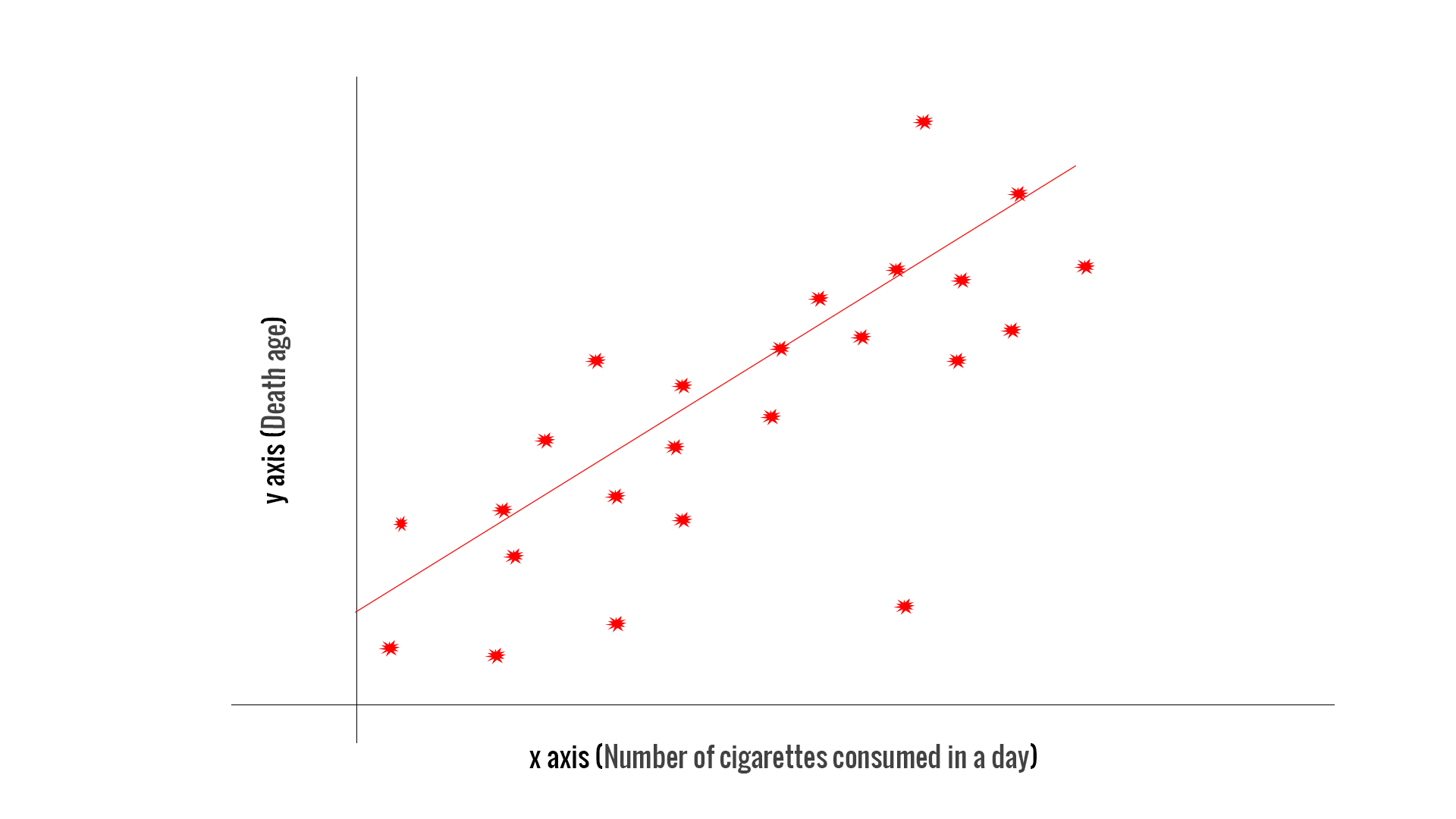

Residual: the difference between the measured and predicted value of the response variable, \(r_i = y_i - f(x_i)\). The residual sum of squares (RSS) is defined as \(RSS = \sum_{i=1}^{n} (y_i - f(x_i))^2 = \sum_{i=1}^{n} r_i^2\).

Solving the system of linear equations for the regression coefficients \(b_j\) is equivalent to solving the matrix equation \(AX = C\), where \(X\) is the \(k \times 1\) column vector consisting of the \(b_j\), \(C\) is the \(k \times 1\) column vector consisting of the constant terms, and \(A\) is the \(k \times k\) matrix consisting of the coefficients of the \(b_j\) terms in those equations.

Separate tests on individual predictors need careful interpretation. For example, suppose we apply two separate tests for two predictors, say \(x_1\) and \(x_2\), and both tests have high p-values. One test suggests \(x_1\) is not needed in a model with all the other predictors included, while the other test suggests \(x_2\) is not needed in a model with all the other predictors included. But this doesn't necessarily mean that both \(x_1\) and \(x_2\) are unneeded in a model with all the other predictors included. It may well turn out that we would do better to omit either \(x_1\) or \(x_2\) from the model, but not both. How then do we determine what to do? We'll explore this issue further in Lesson 6.
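The residual, RSS, and matrix-equation definitions can be sketched in a few lines of NumPy. This is a minimal illustration, not the post's own method: it assumes the linear system in question is the usual normal equations, so that \(A = X^{T}X\) and \(C = X^{T}y\) for a design matrix with an intercept column. The data values and the helper names `fit_normal_equations`, `residuals`, and `rss` are hypothetical.

```python
import numpy as np


def fit_normal_equations(X, y):
    """Solve A b = C, assuming A = X'X and C = X'y (the normal equations)."""
    A = X.T @ X          # k x k matrix of coefficients of the b_j terms
    C = X.T @ y          # k x 1 vector of constant terms
    return np.linalg.solve(A, C)


def residuals(X, y, b):
    """r_i = y_i - f(x_i), where f(x_i) is the fitted value for row i."""
    return y - X @ b


def rss(X, y, b):
    """Residual sum of squares: the sum of r_i^2 over all observations."""
    r = residuals(X, y, b)
    return float(r @ r)


# Hypothetical data: two predictors and five observations.
x1 = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
x2 = np.array([2.0, 1.0, 4.0, 3.0, 5.0])
y = np.array([9.1, 8.8, 19.2, 17.9, 26.1])

# Design matrix with a leading column of ones for the intercept.
X = np.column_stack([np.ones_like(x1), x1, x2])

b = fit_normal_equations(X, y)
print("coefficients:", b)
print("RSS:", rss(X, y, b))
```

Forming \(X^{T}X\) explicitly keeps the code close to the matrix equation in the text; for ill-conditioned data, `np.linalg.lstsq` is usually the more numerically stable choice.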

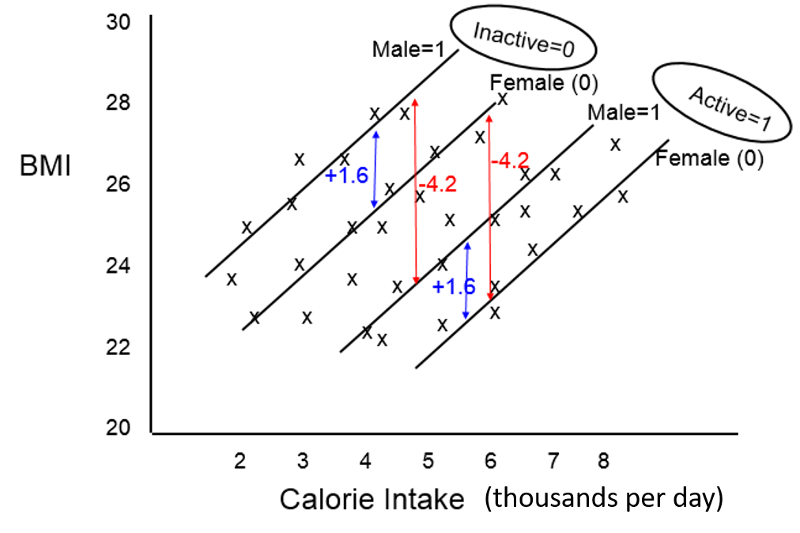

Multiple Hypothesis Testing and Meta-Analysis.

Simple linear regression calculates a linear least-squares regression for two sets of measurements. Multiple linear regression, in contrast, involves multiple predictors, so testing each variable can quickly become complicated. A population model for a multiple linear regression that relates a y-variable to \(p-1\) x-variables is written as \(y_i = \beta_0 + \beta_1 x_{i,1} + \beta_2 x_{i,2} + \dots + \beta_{p-1} x_{i,p-1} + \epsilon_i\). When testing an individual coefficient, the test statistic is the estimate minus its hypothesized value, divided by the standard error of the estimate. Note that the hypothesized value is usually just 0, so this portion of the formula is often omitted.
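For the single-predictor case, the test of a coefficient against a hypothesized value of 0 can be sketched with `scipy.stats.linregress`, which fits a least-squares line to two sets of measurements. The data below are made up for illustration, and the variable names are assumptions; the manual t-statistic is included only to show where the hypothesized value enters the formula.

```python
import numpy as np
from scipy import stats

# Hypothetical measurements (not taken from the post).
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([2.0, 4.5, 5.1, 8.9, 9.2, 13.0])

# Least-squares fit of y on x; returns slope, intercept, rvalue, pvalue, stderr.
result = stats.linregress(x, y)

# t-statistic: (estimate - hypothesized value) / standard error.
# With a hypothesized value of 0, the subtraction drops out.
hypothesized_slope = 0.0
t_stat = (result.slope - hypothesized_slope) / result.stderr

# Two-sided p-value from a t distribution with n - 2 degrees of freedom.
df = len(x) - 2
p_manual = 2.0 * stats.t.sf(abs(t_stat), df)

print("slope:", result.slope, "stderr:", result.stderr)
print("t:", t_stat, "p (manual):", p_manual, "p (linregress):", result.pvalue)
```

The p-value reported by `linregress` is for the null hypothesis that the slope is zero, so it should agree with the manual calculation here. Testing against a nonzero hypothesized value would require the manual formula.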
